Experimental psychologists often deceive their participants about certain aspects of their studies. They may provide false cover stories, simulate interactions with other people who are actually computer programs, or give participants fake feedback on an intelligence test. Researchers who use deception also often include a 'post-experimental inquiry' designed to suss out whether participants detected the deceptive elements of the study and/or guessed its true purpose. These inquiries are often referred to as suspicion probes, and they can take many forms.

Experimentalists are often taught that suspicion probes, and the exclusion of suspicious participants, are necessary for valid experimental research. Doctoral students read quotes like "It is impossible to overstate the importance of the post-experimental follow-up. . . . the experimenter needs to learn if the deception was effective or if the participant was suspicious in a way that could invalidate the data based on his or her performance in the experiment."

Yet might this approach of identifying and excluding 'suspicious' participants actually cause problems? I argue that it might.

In a survey of 77 social psychologists, over 97% of those who use deception reported including a suspicion probe. Of these investigators, 84% administered the probe before debriefing, likely because they wanted to assess suspicion before participants learned the full details of the study; 10% administered the probe both before and after debriefing. The probes took the form of verbal interviews between experimenters and participants (57%), computerized surveys (23%), and/or paper-and-pencil surveys (21%). The number and content of the questions in these interviews and surveys varied wildly, with no standardization across groups. When participants met the researchers' (unstandardized) criteria for being 'suspicious', 58% of researchers discarded these participants, whereas 27% included suspicion ratings as a statistical covariate in their analyses.

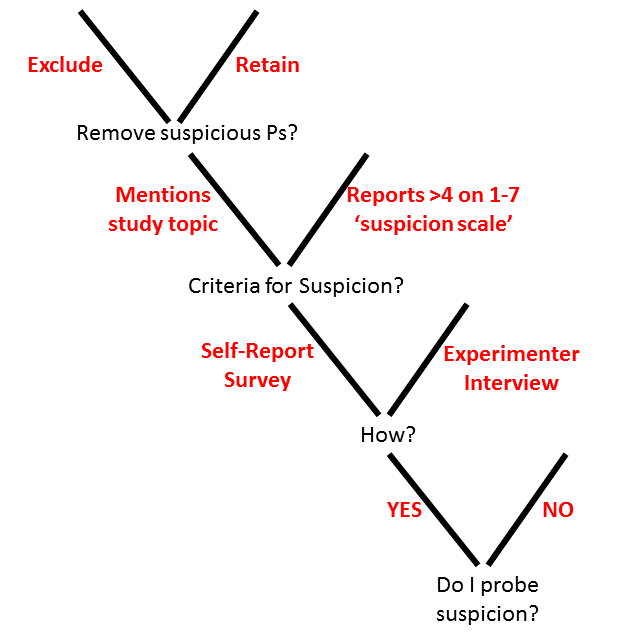

As you can see from this survey, suspicion probing is a wild and lawless frontier. It opens the door to researcher degrees of freedom, as investigators make undisclosed and/or unjustified decisions along the garden of forking paths.

As outlined above, suspicion probes are often unstandardized and largely unvalidated measures that are idiosyncratic to a given laboratory or investigator.

Even when experimenters intentionally and covertly gave participants information about the study's deception, those participants did not reveal that information during a subsequent suspicion probe. This finding has been replicated repeatedly, even when participants were rewarded for reporting their suspicions. It suggests that suspicion probes do not reliably detect suspicion even when it exists, and thus that they are not valid.

Trained experimenters are also unable to reliably distinguish participants who have received prior information about the study's deceptive elements from those who have not, which is further evidence that suspicion probes are invalid.

Such probes often rely on qualitative responses to an experimenter's interview questions, which can introduce experimenter bias into the procedure: the experimenter's subjective interpretation of each response shapes their ultimate judgment of whether a participant was suspicious.

Absent a standardized protocol, experimenters may also phrase questions in ways that lead participants toward a given response, another potential source of bias. Further, participants may express suspicion for other reasons. They may be trying to avoid looking like rubes who were fooled by the experimenters, or they may want to cause problems for the people who just put them through a boring and/or distressing experiment.

Without valid and standardized probing procedures, sorting participants into 'suspicious' and 'non-suspicious' categories may not reflect a meaningful distinction that maps onto the intended construct. Even valid probes will entail some degree of measurement error, which undermines efforts to assess suspicion accurately and reliably. And even if suspicion probes were standardized and validated, there would still be uncertainty about what to do with 'suspicious' participants.
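To make the measurement-error point concrete, here is a back-of-the-envelope sketch. It treats a probe as a classifier with a sensitivity (the share of truly suspicious participants it flags) and a specificity (the share of non-suspicious participants it correctly passes). The function name and all of the numbers are hypothetical illustrations, not estimates from the studies discussed here.

```python
def probe_composition(true_rate, sensitivity, specificity):
    """Return the expected share of participants flagged by a suspicion probe,
    and the share of flagged participants who were actually suspicious
    (the probe's positive predictive value). All inputs are hypothetical."""
    flagged = true_rate * sensitivity + (1 - true_rate) * (1 - specificity)
    if flagged == 0:
        raise ValueError("probe flags no one; positive predictive value is undefined")
    ppv = true_rate * sensitivity / flagged
    return flagged, ppv


# Made-up values: 10% truly suspicious, probe catches 30% of them,
# and wrongly flags 5% of non-suspicious participants.
flagged, ppv = probe_composition(true_rate=0.10, sensitivity=0.30, specificity=0.95)
print(f"flagged: {flagged:.3f}, actually suspicious among flagged: {ppv:.3f}")
```

With these made-up values, the probe flags 7.5% of the sample, yet only 40% of those flagged were actually suspicious, while 70% of the truly suspicious participants go undetected. Excluding on such a probe would mostly remove the wrong people.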

Should 'Suspicious Participants' Be Excluded From Data Analysis?

'Suspicious' participants are often removed from data analyses, either outright through casewise deletion or statistically by controlling for suspicion as a covariate. This practice is motivated by the assumption that suspicious participants provide inaccurate data for testing the study's hypotheses. But to what extent is this assumption grounded in fact?

Let's look at a specific case study: experiments that simulate real people with computerized avatars. Many studies tell participants that they are interacting with real people on a given task when they are in fact interacting with pre-programmed computer avatars. Participants are often excluded if they indicate that they were suspicious during the study. However, research suggests that people interact with avatars they know to be computer programs in much the same way as with avatars they believe are actual people. So this is one case where fears of 'contaminating' the analyses by including suspicious participants would not be warranted.

Even if participants are aware of your deception, I could find no evidence establishing a systematic way in which *including* such 'suspicious' participants would bias your results. Indeed, demand characteristics and suspicion of them (however defined) will likely affect different participants in different ways. Some participants may try to 'help' the experimenters by confirming the predictions they have guessed, some may actively seek to undermine the study, and still others may respond randomly or erratically, having lost faith that the study is a worthwhile endeavor. This source of noise in your data should therefore be roughly similar in structure to the noise introduced by participants who vary in how well they understand the experimenter's instructions or how actively they engage with and attend to the task at hand. Investigators rarely screen for and remove participants on these variables, so why be so selective about suspicion?

Conversely, *excluding* your suspicious participants is likely to bias your findings in meaningful ways. Researchers have recently shown that excluding participants who fail attention checks removes non-random groups of participants from datasets, which undermines the validity and generalizability of the results. The same goes for suspicion probes, which likely eliminate individuals who tend to be more generally suspicious, analytic, and intelligent. Thus, excluding 'suspicious' participants trades one problem (including participants who guessed your deception) for another (eliminating a non-random portion of your sample).
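The selection problem can be illustrated with a deliberately simple, made-up example. The sketch below assumes (hypothetically) that the manipulation's effect shrinks as a trait such as analytic thinking increases, and that the probe flags exactly the high-trait participants. Neither assumption comes from the studies cited above; the helper names, trait scale, and cutoff are all invented to stand in for the general case where whatever predicts being flagged also relates to the outcome.

```python
from statistics import mean

# Hypothetical between-subjects study: one participant per trait level
# (0-9, e.g. an "analytic thinking" score) in each condition.
TRAITS = range(10)


def outcome(trait, treated):
    """Hypothetical outcome model: the baseline rises with the trait, and the
    manipulation's effect shrinks as the trait increases."""
    baseline = 10 + 0.5 * trait
    effect = 2.0 - 0.2 * trait if treated else 0.0
    return baseline + effect


def estimated_effect(keep):
    """Difference in mean outcomes (treatment minus control) among the
    participants whose trait level passes the `keep` filter."""
    treat = [outcome(t, True) for t in TRAITS if keep(t)]
    control = [outcome(t, False) for t in TRAITS if keep(t)]
    return mean(treat) - mean(control)


# Assumption: the probe flags participants with trait >= 7 as 'suspicious'.
full_sample = estimated_effect(lambda t: True)
after_exclusion = estimated_effect(lambda t: t < 7)
print(f"full sample: {full_sample:.2f}, after exclusion: {after_exclusion:.2f}")
```

Under these assumptions, dropping the flagged participants moves the estimated effect from 1.10 to 1.40, because the retained sample describes less analytic people rather than the population originally sampled. If the trait were unrelated to the effect, the two estimates would coincide, so how large this bias is in any real study remains an empirical question.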

Conclusion

For these reasons, I see merit in retaining your 'suspicious' participants*. Keeping them in our analyses makes our data noisier but less biased, and it removes an important source of measurement error and researcher degrees of freedom.

*Within reason. If every participant reveals your hypotheses back to you before you've had a chance to debrief them, your study needs to be re-worked to reduce such suspicion. Thus, suspicion probes may be helpful to pilot and refine our procedures to ensure a suspicion rate below a given threshold (e.g., 5% of participants report suspicion).
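If suspicion probes are used in this piloting role, one way to judge whether a procedure meets the threshold is to look at an upper confidence bound on the pilot's suspicion rate, not just the raw rate, since a small pilot can show a low observed rate by chance. The sketch below uses the Wilson score interval for a binomial proportion, which is one standard choice among several. The 5% threshold comes from the example above; the pilot counts and function names are hypothetical.

```python
from math import sqrt


def wilson_upper(flagged, n, z=1.96):
    """Upper limit of the Wilson score interval for a binomial proportion
    (z=1.96 gives an approximate two-sided 95% interval)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = flagged / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half_width = (z / denom) * sqrt(p * (1 - p) / n + z**2 / (4 * n**2))
    return center + half_width


def pilot_check(flagged, n, threshold=0.05):
    """Compare a pilot's suspicion rate with a chosen threshold, using the
    Wilson upper limit so that a small pilot is not over-trusted."""
    observed = flagged / n
    upper = wilson_upper(flagged, n)
    return observed, upper, upper < threshold


# Hypothetical pilot: 2 of 60 participants flagged by the probe.
observed, upper, passes = pilot_check(flagged=2, n=60)
print(f"observed rate {observed:.3f}, upper bound {upper:.3f}, below 5%: {passes}")
```

Here, 2 flags out of 60 gives an observed rate of about 3.3%, but the upper bound is roughly 11%, so this hypothetical pilot would not by itself show that the suspicion rate is below 5%. Whether to use a bound at all, and at what confidence level, remains the researcher's judgment call.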